AI, or rather the news about AI, is taking over the headlines. We heard how AI beat the best human players at Go in a very short span of time, and we heard tech and science celebrities like Elon Musk, Stephen Hawking, Max Tegmark and Yuval Noah Harari warn us about AI Armageddon: the singularity is near, maybe even within our generation. Strong AI (robots that really mimic humans) is coming to town, so be prepared! You might lose your job, or worse, you might lose your freedom and end up a slave to a legion of robots!

Not so fast! According to Judea Pearl, one of the researchers who pioneered Bayesian networks and the probabilistic approach to AI, what AI is doing now is just plain curve fitting, which means that it can only detect correlations in data. The fact that AI can conquer chess and Go, design medicines at the molecular level, drive cars and pretend to be a human customer service agent just proves that the range of domains suitable for curve fitting is wider than we initially expected.

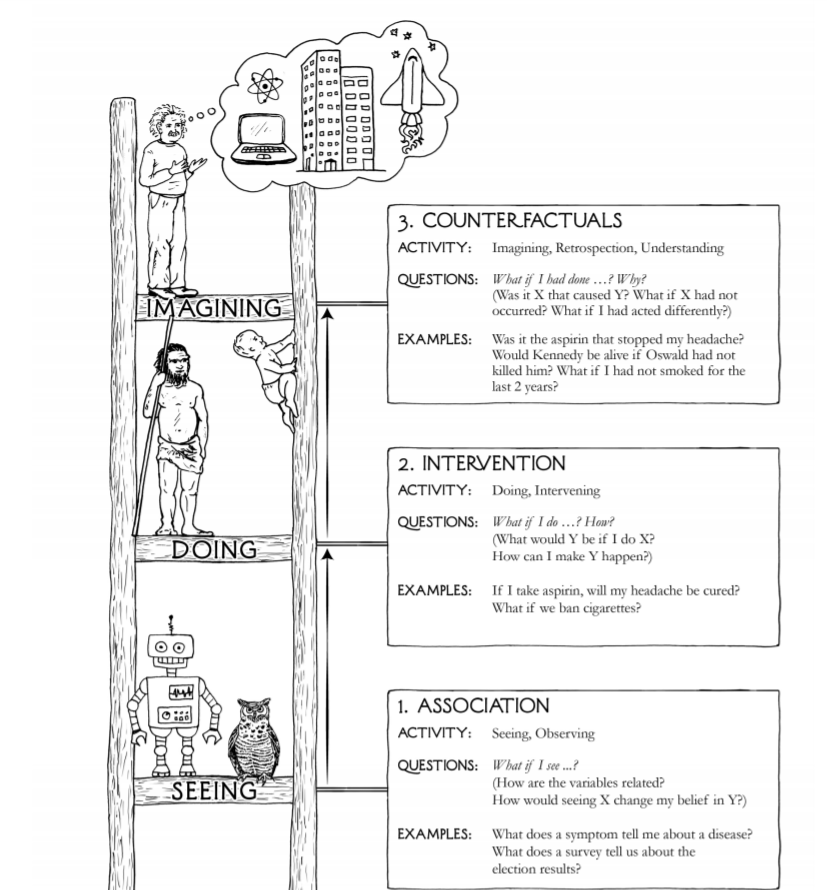

It doesn’t mean that the day when AI can approximate human intelligence is near, because out of the three levels of cognitive ability, seeing (association), doing (intervention) and imagining (counterfactuals), AI is only good at the lowest level, namely seeing.

As a geek with a long-standing interest in all things scientific, I have followed the AI debate closely. Like many, I’ve been exposed to the argument for why AI Armageddon is imminent (it basically boils down to “since AI is advancing exponentially, and anything exponential is bound to happen pretty soon, an AI takeover is imminent”), so I am interested in exploring the opposite opinion. Judea Pearl is one of the skeptics, and this is how I came across his latest book, The Book of Why.

Actually, besides discussing AI, the book also discusses how to establish cause and effect between related events. It shows how we can use modern statistical tools, such as a Directed Acyclic Graph (DAG), to build a model linking potential causes and effects, and then use statistical data to prove or disprove the relationship, much like how we first postulate Newton’s law of gravity and then use it to prove or disprove Kepler’s three laws.

One example that the book gives is the smoking-causes-cancer debate. We all know now that smoking does cause cancer, but back in the early years of the tobacco industry, this was far from obvious. Yes, people did witness a sharp rise in lung cancer right after tobacco was introduced, but correlation doesn’t mean causation, as every statistics student knows. Tobacco came at a time when western countries were undergoing rapid urbanization and industrialization, so perhaps it was industrialization that was really causing the lung cancer? Or what about some gene lurking in the patients’ bodies that causes both the cancer and the predisposition to pick up cigarettes? In either scenario, we cannot really say that it’s the smoking that causes the cancer!
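To see why correlation alone could not settle the question, here is a small Python sketch of the hidden-gene scenario. It is only an illustration under made-up assumptions: the function name `risk_gap` and every probability in it are hypothetical, and the simulated world is one where smoking has no effect on cancer at all (the opposite of what we now know). Merely observing people still shows smokers getting more cancer, while an intervention that assigns smoking by coin flip does not.

```python
import random

def risk_gap(n, intervene, seed=0):
    """Return (cancer rate among smokers) - (cancer rate among non-smokers)
    in a hypothetical world where a hidden gene, not smoking, causes cancer."""
    rng = random.Random(seed)
    smokers = [0, 0]      # [people, cancer cases]
    non_smokers = [0, 0]
    for _ in range(n):
        gene = rng.random() < 0.3  # assumed: 30% carry the gene
        if intervene:
            # "Doing": smoking is assigned by coin flip, ignoring the gene.
            smokes = rng.random() < 0.5
        else:
            # "Seeing": carriers are assumed more likely to pick up smoking.
            smokes = rng.random() < (0.8 if gene else 0.2)
        # Assumed: cancer depends on the gene only; smoking plays no role.
        cancer = rng.random() < (0.3 if gene else 0.05)
        group = smokers if smokes else non_smokers
        group[0] += 1
        group[1] += int(cancer)
    return smokers[1] / smokers[0] - non_smokers[1] / non_smokers[0]

observed = risk_gap(100_000, intervene=False)
randomized = risk_gap(100_000, intervene=True)
print(f"gap when we only observe:  {observed:.3f}")
print(f"gap when we intervene:     {randomized:.3f}")
```

In this toy world the observed gap is clearly positive while the randomized gap sits near zero, which is exactly the pattern the gene hypothesis predicts. Telling the two worlds apart takes more than the raw correlation.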

To answer this question, it’s necessary to use the Bayesian models and statistical tools introduced in the book to prove or disprove these hypotheses. Thanks to the efforts of Professor Judea Pearl and other researchers, we can firmly establish the causal relationship between different events. So now smokers have no excuse to continue smoking; the truth is plain for all to see!

So this is what Judea Pearl is trying to tell us: we humans can easily imagine models that chain events together in a series of cause-effect relationships, but AI can’t. Humans can ask “If I do this, will that change the outcome?”, then take steps to intervene, and then observe and compare the outcomes. Humans can also ask counterfactual questions, like “If I hadn’t done this, would I still have gotten the Oxford scholarship?”, and then proceed to answer them. But AI can’t do that. This inability is the biggest reason why AI will not be a serious threat to human survival, at least for now.

To put it the other way, until AI can start to reason like humans and ask counterfactual questions, there is no reason to worry about AI Armageddon. So, drink and be merry! We won’t die tomorrow.

Is Judea Pearl’s argument persuasive? Not entirely, to me. Despite the impressive-sounding computational power AI has, it is still dwarfed by our brain. A typical human brain has at least 100 trillion neural connections, which is roughly 250 to 1,000 times the number of stars in our galaxy, depending on the estimate. By contrast, the biggest feedforward neural network that has ever been trained has only… 100 billion connection weights, and at that size we already run into memory limits. Not only that, researchers were not getting any interesting results, simply because no one knows how the human brain is wired.

But what is stopping a computer from designing itself to mimic our brain structure, using evolutionary algorithms or deep neural networks? I can certainly imagine a scenario in which a computer “learns” how to set an objective function that maximizes its own smartness, leading to more and more complex and multifunctional brains over thousands of generations. I’m not saying that this is possible, but neither the book nor the AI Armageddon skeptics present an argument for why it would be impossible.

So who knows?